Biomedical engineering senior Ian Y. Kim wears a motion capture suit during an experiment in the Neuromuscular and Musculoskeletal Biomechanics Lab at UT Dallas.

New University of Texas at Dallas research shows the potential for detecting mental health disorders by analyzing the way a person moves.

Using 3D motion capture and machine-learning models, researchers were able to identify elevated depression and anxiety symptoms in subjects from the way they walked and got up from a chair. The findings, published online in the May issue of Gait & Posture, demonstrate the potential for developing wearable devices that one day could give users early warnings about their mental health.

“Our study showed that depression and anxiety can be identified from human movement,” said Dr. Gu Eon Kang, assistant professor of bioengineering in the Erik Jonsson School of Engineering and Computer Science. “Gait analysis could offer an objective method for evaluating mental health.”

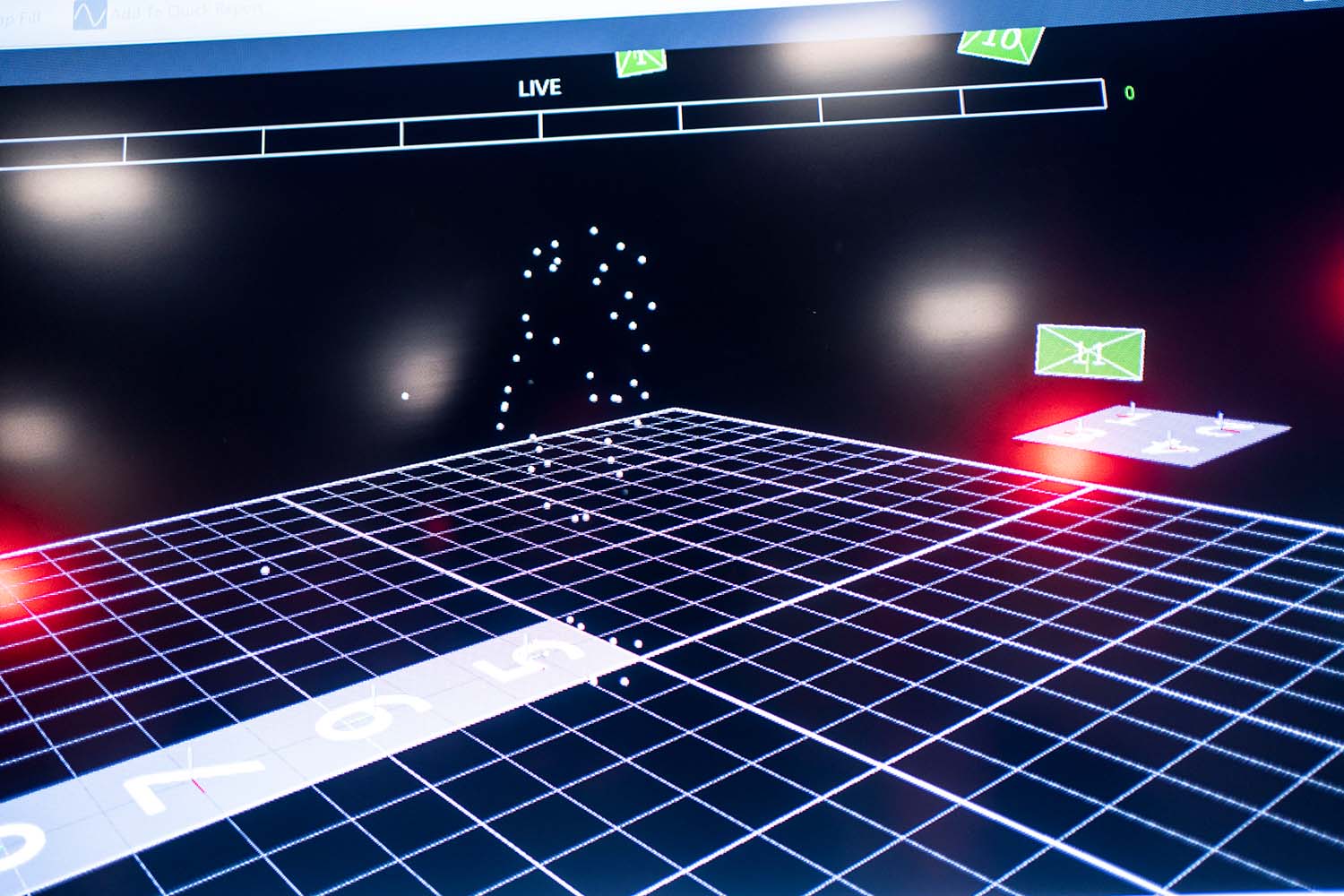

The computer screen shows the marker trajectory during a motion capture experiment.

Using gait analysis to identify mental health issues and emotional states is an emerging area of research. Kang cautioned, however, that wearable mental health-screening technology could serve as an indicator of possible problems, not as a replacement for a professional diagnosis.

“If we can detect potential issues, people can seek treatment early and outcomes could be much better,” he said.

Kang said the research could have other applications such as helping animators better illustrate emotion.

Researchers in Kang’s Neuromuscular and Musculoskeletal Biomechanics Lab recruited 30 young adults for the study and assessed their levels of depression and anxiety using standardized questionnaires. Participants performed walking and sit-to-walk tasks while wearing a black form-fitting motion capture suit covered with 68 reflective markers. Their movements were recorded by a 16-camera motion capture system.

Researchers trained a machine-learning model using data from participants’ movements combined with information about whether they fell into higher- or lower-symptom groups for depression or anxiety, based on their questionnaires. Then, they asked the model to predict the mental state of other participants on whose data the system had not been trained.

The model correctly classified participants about 75% of the time using data from the walking task and about 77% of the time using data from the sit-to-walk task.
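The train-then-test procedure described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the article does not say which model or validation scheme the researchers used, so the nearest-centroid classifier, the leave-one-participant-out loop, the two features (loosely, a walking speed and a joint angle) and the randomly generated data are all hypothetical stand-ins rather than the study's method or results.

```python
import random


def centroid(rows):
    """Mean feature vector of a list of equal-length rows."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def predict(train_x, train_y, sample):
    """Nearest-centroid rule: choose the group whose mean is closest."""
    best_label, best_dist = None, float("inf")
    for label in sorted(set(train_y)):
        group = [x for x, y in zip(train_x, train_y) if y == label]
        c = centroid(group)
        dist = sum((a - b) ** 2 for a, b in zip(sample, c))
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label


def leave_one_out_accuracy(features, labels):
    """Hold out each participant in turn and train on all the others."""
    correct = 0
    for i in range(len(features)):
        train_x = features[:i] + features[i + 1:]
        train_y = labels[:i] + labels[i + 1:]
        if predict(train_x, train_y, features[i]) == labels[i]:
            correct += 1
    return correct / len(features)


# Synthetic stand-in data (NOT study data): 30 hypothetical participants,
# label 0 = lower-symptom group, label 1 = higher-symptom group.
rng = random.Random(0)
labels = [0] * 15 + [1] * 15
features = [
    [rng.gauss(1.2 - 0.1 * y, 0.1), rng.gauss(60.0 - 3.0 * y, 4.0)]
    for y in labels
]

accuracy = leave_one_out_accuracy(features, labels)
print(f"held-out accuracy on synthetic data: {accuracy:.2f}")
```

The key point the sketch captures is that each prediction is made for a participant whose data the model never saw during training, which is what makes the reported accuracy a test of generalization rather than memorization.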

Angeloh Stout BS’20, MS’23, a biomedical engineering doctoral student in Kang’s lab and a first author of the study, said that as a former high school student athlete, he became interested in the research after taking Kang’s biomechanics class. Stout, who helped set up Kang’s lab, said he was surprised by the changes in how people walked depending on their emotional state.

A researcher prepares the reflective markers that will be attached to the motion capture suit.

“You expect someone to walk slower when they’re sad. But what’s interesting to see is the different body responses that occur,” Stout said. “Students with higher depression and anxiety scores showed subtle but measurable differences from students with lower scores in how their joints moved along with greater hesitation during transitions like standing up to walk.”

Stout and other researchers in Kang’s lab published another recent study, in the February edition of Gait & Posture, that found a person’s gait also can provide insight into their emotional state.

In that study, participants were asked to recall memories to elicit five emotions: anger, sadness, joy, fear and a neutral state. Then, researchers captured their gait patterns in the lab. The model was about 59% accurate in distinguishing between emotional states and detected sadness with 66% accuracy.
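The two figures above measure different things: one is accuracy across all five emotional states, the other is accuracy for a single state. The sketch below shows how the two can be computed from the same set of predictions. It assumes the per-emotion figure is the share of true examples of that emotion that the model labeled correctly, which the article does not confirm, and the labels and predictions are invented toy values, not study data.

```python
from collections import Counter


def overall_accuracy(true_labels, predicted):
    """Share of all samples whose predicted label matches the true one."""
    hits = sum(t == p for t, p in zip(true_labels, predicted))
    return hits / len(true_labels)


def per_class_accuracy(true_labels, predicted):
    """For each true label, the share of its samples predicted correctly."""
    totals = Counter(true_labels)
    hits = Counter(t for t, p in zip(true_labels, predicted) if t == p)
    return {label: hits[label] / totals[label] for label in totals}


# Toy values for illustration only (NOT study data).
true_labels = ["anger", "sadness", "joy", "fear", "neutral"] * 2
predicted = [
    "anger", "sadness", "fear", "fear", "joy",
    "joy", "sadness", "joy", "anger", "neutral",
]

# With five equally likely states, random guessing would be right 20% of the time.
chance_level = 1 / len(set(true_labels))

print(f"overall: {overall_accuracy(true_labels, predicted):.2f}")
print(f"chance:  {chance_level:.2f}")
for emotion, score in per_class_accuracy(true_labels, predicted).items():
    print(f"{emotion}: {score:.2f}")
```

A five-way classifier is judged against a 20% chance baseline, not 50%, which is useful context when reading an overall figure of about 59%.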

While additional research with more subjects is needed to improve the method, Kang said the team’s study demonstrates the possibility of an objective measure of human emotion.

“Believe it or not, gait may be the most reliable modality for detecting emotion,” he said.

Kang said he became interested in studying gait as a way to combine his longtime interest in psychology with engineering. His goal is to determine whether gait analysis also can be used to provide early warnings about other mental health issues such as bipolar disorder and neurodevelopmental conditions such as attention-deficit/hyperactivity disorder.

From left: Dr. Gu Eon Kang, Mehreen Dawood, Ashley T. Adams, Angeloh Stout BS’20, MS’23, Mrigank Maharana, Ian Y. Kim, Luke Fisanick and Marvin Alvarez BS’25.

Other authors of the study to detect depression and anxiety through gait analysis include several biomedical engineering students: graduate student Marvin Alvarez BS’25 and seniors Macie Kauffman, Mehreen Dawood, Ashley T. Adams, Ian Y. Kim, Mrigank Maharana and Luke Fisanick, who conducted related research through the Hobson Wildenthal Honors College Undergraduate Research Apprenticeship Program. Kaye Mabbun MS’25, Dr. Yunhui Guo, assistant professor of computer science, and Dr. Chuan-Fa Tang, assistant professor of mathematical sciences in the School of Natural Sciences and Mathematics, also contributed.

In addition to Kang, co-authors of the study to gauge emotions by studying gait include Justin MacNeal Cadenhead BS’23, MS’24; Maharana; Ashley Guzman BS’23, MS’24; Kauffman; and Katherine Brown PhD’21, assistant professor of instruction in bioengineering. The UT Dallas researchers collaborated with colleagues from McGovern Medical School at UT Health Houston.

The research was funded by the National Science Foundation (grant 2513070).