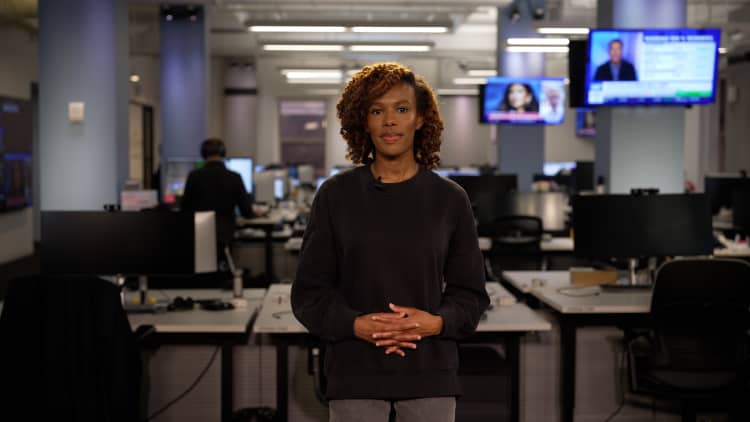

Amelia Miller’s second job is a bit uncommon.

Miller, a fellow at Harvard University’s Berkman Klein Center for Internet & Society, is also a relationship coach — specifically, one for people who’ve formed emotional connections with artificial intelligence chatbots. “Whether your chatbot acts as a therapist, assistant, lover or friend, I’m here to help you be intentional about the relationship you want — and how to build it,” her website reads.

She primarily aims to help her clients, mostly men working in tech, keep their relationships with chatbots from eroding their “capacity to connect with real people,” she says. Since launching her coaching business in June 2025, Miller has received “so much outreach,” typically through an inquiry form on her website, she says. The 29-year-old has held one-on-one sessions, either in person in New York City or virtually, with more than a dozen clients so far, she says.

“The limit is my ability to dedicate my time and energy to it, not interest. I’m taking on as many people as I have the bandwidth for at the moment,” says Miller.

The mere existence of Miller’s second job highlights a new, still-developing dynamic in modern life: some AI users’ growing personification of the technology, their increasing daily reliance on it and what it means for their mental health and social well-being. More than 10% of U.S. adults use generative AI daily, and of them, almost 90% use it for personal reasons like emotional support and advice, according to a health research survey of more than 20,000 U.S. adults published on Jan. 21.

Among young people, AI uptake is stronger. Nearly a quarter of people in the U.S. between the ages of 18 and 21 reported using AI for mental health advice in early 2025, according to a survey study conducted by researchers from nonprofit think tank Rand, Harvard Medical School and the Brown University School of Public Health. Roughly two-thirds of those users “sought advice monthly or more often,” found the study, which surveyed 1,058 nationally representative youths aged 12 to 21.

Heavy use of AI chatbots could shift people’s mental health and social well-being in both positive and negative ways: potentially helping “isolated or neurodivergent” people practice social skills while eroding others’ ability to emotionally connect with humans in real life, according to an OpenAI product policy researcher’s April 2025 paper. The paper referenced two AI users who took their own lives after spending “increasing time with the companion AI and commensurately [pulling] away from human relationships in the months leading up to their suicide, indicating an over-reliance on the companion AI.”

AI companies including Anthropic, Google and OpenAI say they’ve improved how their chatbots respond to mental health crises, and that their technology shouldn’t replace professional mental health care. OpenAI, for example, added a feature to ChatGPT in August that encourages users to take breaks after using the tool for extended periods of time instead of nudging them to keep responding.

Still, mental health providers these days should ask their patients if, and how, they’re using AI chatbots in their daily lives, New York University researchers suggested in an April 1 paper that referenced the youth survey data. In Miller’s practice, two of her clients — who were in a romantic relationship with each other — were each asking ChatGPT separately for advice about their disagreements as a couple, ultimately making their fights worse, she says.

“The model would validate each of their perspectives, and then they would come back and feel much more stubborn in their view that they were right,” says Miller.

A human-AI relationship coach’s 3-part philosophy

Miller’s coaching stems largely from her experience as a social scientist and computer scientist, including a decade of research “on how technology influences intimacy,” she says. She declined to share the rates she charges clients. “My goal is to help people, and to figure out how to make it a viable business,” Miller says.

Her coaching business originated from dissertation research for her master’s degree in social science of the internet from the University of Oxford. As she studied the potential “social and ethical repercussions” of using AI companion products, she put out ads on the internet to connect with people working at companies developing AI chatbots.

Then, “people who were in relationships with chatbots started reaching out to me, just wanting to talk. And so I started taking some of those conversations,” says Miller. Initially, she saw those conversations as research. Eventually, a friend suggested that she start a coaching practice to help people who came to her for support, she says.

Miller’s coaching philosophy consists of three pillars, she says:

Artificial intimacy literacy: Teaching her clients how AI chatbots “are engineered to cultivate emotional attachments” and sharing emerging research about the consequences of engaging with chatbots that are too affirming and people-pleasing. “The goal is if they understand these mechanisms, they’ll be better able to manage their influence,” Miller says.

Developing personal AI constitutions: Asking people who engage with chatbots often to reflect on how they use them, and to think about whether they feel positively or negatively about their AI-use habits. She’s particularly interested in helping them consider “the ways in which the relationship with the chatbot may be affecting their human relationships,” she says.

“Analog gym”: Having clients participate in exercises that, as Miller puts it, “help rebuild the social muscles that technology is atrophying.” The exercises often encourage being more vulnerable and present during real-life conversations. The idea is to help her clients feel more confident interacting with other people, she says.

Her coaching practice isn’t the only resource for people who feel they’ve become too reliant on AI chatbots. Internet and Technology Addicts Anonymous offers support for people who feel their use of AI chatbots is “compulsive and harmful.” Some mental health professionals offer treatment for patients with high levels of chatbot use, and some individuals find solace with each other through community groups on platforms like Reddit.

Miller tells her clients that she isn’t a psychologist or therapist, she says. Her work differs from what most mental health professionals provide, she adds: “We really need people who sit at these intersections of social sciences and computer sciences to step in and try to think through how we can help people be more empowered to build the relationships that they want with these tools.”

Anyone with “more serious psychological conditions” should speak with a mental health professional instead, she says.

In October, Miller added group workshops to her coaching repertoire — working with up to 70 people at a time, at four different tech-focused organizations and conferences so far, she says. She’s yet to charge money for her group workshops, and she’s working at her day job to adapt them for grade school, high school and college students, she adds.

Across all formats, “my goal is to help people be more attentive to the ways speaking to chatbots might rewire their expectations for human relationships and their desires to participate in them,” says Miller. “[I want] to help people prevent those shifts before it’s too late.”

If you’re experiencing a mental health crisis or concerning mental health symptoms, you can contact the free, confidential National Helpline for Mental Health at 1-800-662-HELP (4357). If you are having suicidal thoughts or are in distress, contact the Suicide & Crisis Lifeline at 988 for support and assistance from a trained counselor.

CNBC Select is editorially independent and may earn a commission from affiliate partners on links.